Building a Suzuki Omnichord Emulator (part 2)

In the first part of this series, I discussed the choice of the technology stack and latency optimization.

The goal is to create a Suzuki Omnichord emulator for the Android platform.

In this second part, let's examine how I managed to "clone" the characteristic sound of the Omnichord chords.

As I wrote before, this series is not a tutorial but an analysis of my design choices and of how I solved the problems I encountered along the way.

My reference material was a set of freely available Omnichord samples. Although these samples came from different models, comparing them gave me a good understanding of the instrument.

I must thank those who made these samples freely available:

Matt Lim for the OM-27: github link

Benjamin Dehli for the OM-84: github link

I have to admit that initially, I had no idea how to recreate these sounds.

I could have just played the samples and essentially created a large sampler, but I decided to proceed differently for two reasons:

- the goal is to sell this app on the Play Store, and I don’t want to get sued by Suzuki for using copyrighted material;

- the world of software sound synthesis is new to me, and I’d like to give it a try.

The chord sound was the most challenging aspect, far more than I had anticipated.

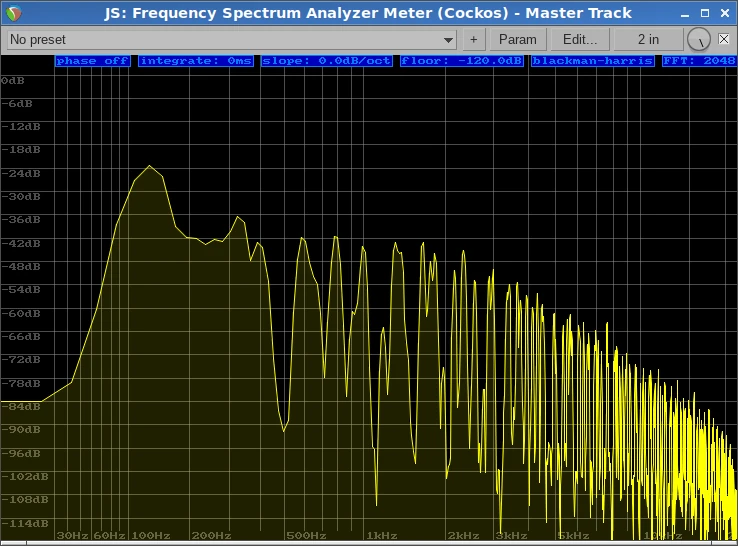

Starting with a C Major sample, I tried to analyze the harmonics with Reaper’s Frequency Spectrum Analyzer Meter. At first, all I saw was an impossible mess to untangle.

Reaper needs no introduction, but for the few who don’t know it, it’s one of the best DAWs available.

So, as the great Ash Williams would say, we can take these harmonics... with science!

First Attempt: Additive Synthesis

The first attempt was to use the Fast Fourier Transform (FFT) to extract information about the harmonics and then recreate them with additive synthesis. For the FFT, I used the audio editor Audacity, which includes a convenient function that performs the analysis and exports a txt file containing the entire harmonic spectrum.

After getting this txt file, I created a parser with PHP to extract only the peak frequencies and create a basic data structure that linked these frequencies to their volume.
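My parser was written in PHP, but the peak-picking idea can be sketched in C++ as well. This is only a minimal sketch under a few assumptions: the exported file is taken to contain two whitespace-separated columns (frequency in Hz, level in dB) after a header row, a "peak" is taken to be any bin louder than both of its neighbours, and the names `Peak` and `extractPeaks` are hypothetical.

```cpp
#include <cstddef>
#include <istream>
#include <sstream>
#include <string>
#include <vector>

// One spectral bin or peak: frequency in Hz and level in dB.
struct Peak {
    double frequencyHz;
    double levelDb;
};

// Reads "frequency level" rows and keeps only the local maxima.
// Rows that do not start with two numbers (such as a header row) are skipped.
std::vector<Peak> extractPeaks(std::istream& input) {
    std::vector<Peak> bins;
    std::string line;
    while (std::getline(input, line)) {
        std::istringstream fields(line);
        Peak bin{};
        if (fields >> bin.frequencyHz >> bin.levelDb) {
            bins.push_back(bin);
        }
    }

    std::vector<Peak> peaks;
    // A bin counts as a peak when it is louder than both of its neighbours.
    for (std::size_t i = 1; i + 1 < bins.size(); ++i) {
        if (bins[i].levelDb > bins[i - 1].levelDb &&
            bins[i].levelDb > bins[i + 1].levelDb) {
            peaks.push_back(bins[i]);
        }
    }
    return peaks;
}
```

The returned list is the "basic data structure" in its simplest form: one frequency and one level per peak, ready to be fed to an additive synthesizer.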

Yes, for these kinds of non-critical operations like small parsers, I use the widely criticized PHP, which in these cases is very useful.

Since I was experimenting and needed to be fast, I didn’t immediately implement everything in C++. Instead, I used a powerful tool also available in Reaper: I created a Jesusonic (JSFX) script to read these frequencies and synthesize them additively.

JSFX is a wonderful scripting language created by the authors of Reaper that allowed me to build these prototypes quite easily.

The approach initially worked, but with several problems.

First problem: the sound was a static, lifeless imitation of the original.

I partially solved this problem by randomizing the initial phase for each harmonic, but it still wasn’t enough.

Second problem: extremely high CPU consumption.

Initially, I was synthesizing something like 300 harmonics, and for each harmonic, the CPU had to process a cosine function.

So each sample required about 300 cosine evaluations. At 48,000 samples per second, that meant about 14,400,000 cosine evaluations per second.
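To make the cost concrete, here is a rough C++ sketch of the kind of per-sample loop described above. My prototype was a JSFX script, so this is not the original code: the names `Partial`, `dbToLinear`, `randomizePhases`, and `renderSample` are hypothetical, and the dB-to-linear conversion is an assumption about how the exported levels would be used.

```cpp
#include <cmath>
#include <cstddef>
#include <random>
#include <vector>

constexpr double kTwoPi = 6.283185307179586;

// One harmonic of the additive model.
struct Partial {
    double frequencyHz;
    double amplitude;  // linear gain, not dB
    double phase;      // current phase in radians
};

// Converts a level in dB to a linear amplitude.
double dbToLinear(double levelDb) {
    return std::pow(10.0, levelDb / 20.0);
}

// Gives every partial a random starting phase between 0 and 2*pi.
void randomizePhases(std::vector<Partial>& partials, unsigned seed) {
    std::mt19937 rng(seed);
    std::uniform_real_distribution<double> dist(0.0, kTwoPi);
    for (Partial& p : partials) {
        p.phase = dist(rng);
    }
}

// Renders one output sample: exactly one cosine call per partial.
// Assumes every partial's frequency is below the sample rate.
double renderSample(std::vector<Partial>& partials, double sampleRate) {
    double out = 0.0;
    for (Partial& p : partials) {
        out += p.amplitude * std::cos(p.phase);
        p.phase += kTwoPi * p.frequencyHz / sampleRate;
        if (p.phase >= kTwoPi) {
            p.phase -= kTwoPi;  // keep the phase in a small range
        }
    }
    return out;
}
```

With 300 partials, `renderSample` runs 300 cosine calls, and it has to be called 48,000 times per second, which is where the figure of roughly 14.4 million cosine evaluations per second comes from.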

This was clearly not sustainable. I modified the PHP parser to keep only the harmonics above a certain threshold: with a threshold of -60 dB, I managed to reduce the number of harmonics to about 30.

With 30 harmonics, the sound was still there and recognizable, but at this point, it completely lacked high frequencies (from about 5000 Hz upwards). And despite the drastic reduction in operations, CPU consumption was still very high.

Then I realized that the other Omnichord chords are not obtained by pitching a base chord to different keys; each chord follows its own rules and has different voicings.

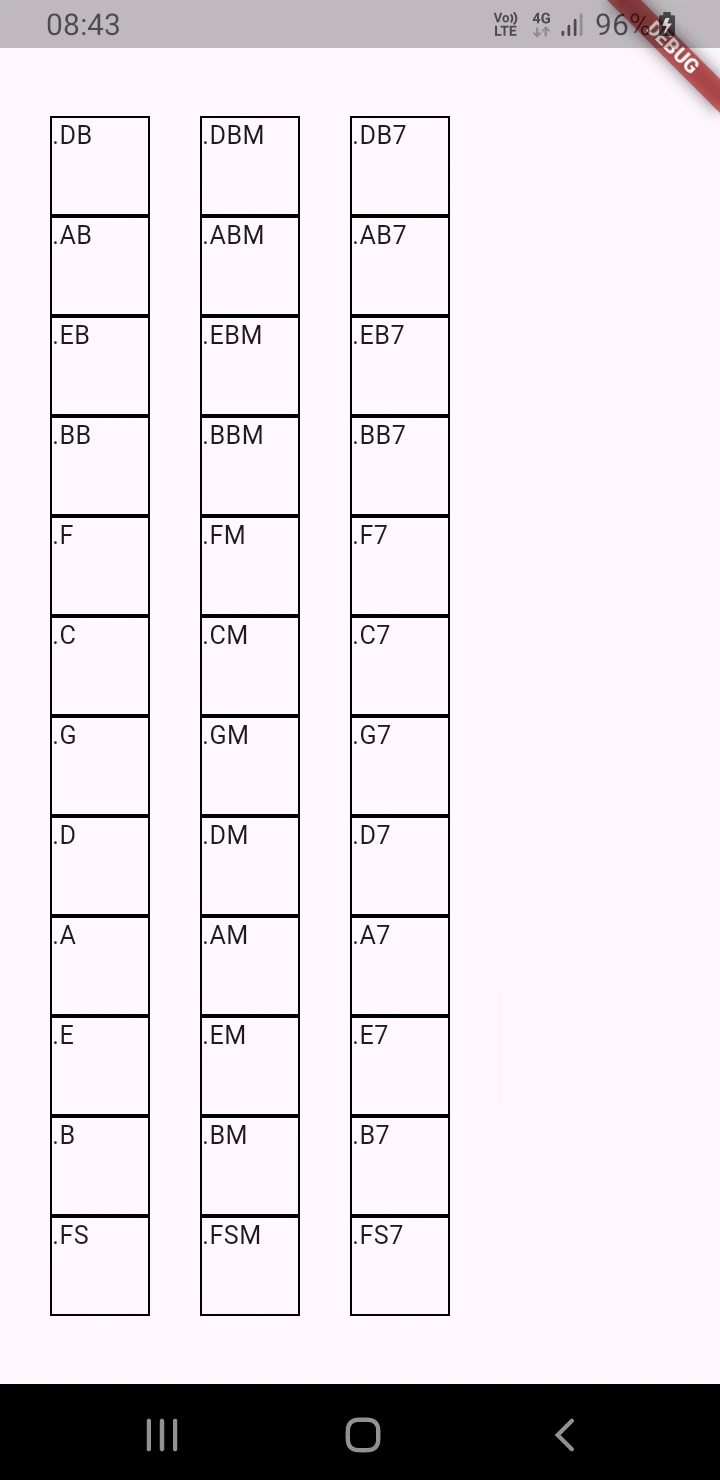

That would have required me to extract the harmonics for all 84 chord combinations obtainable with the Omnichord, which would have been insane, considering that the result still didn’t satisfy me much.

Clearly, this couldn’t be the right path.

The Breakthrough: Sound Modeling, Soft Clipping, and Subtractive Synthesis

At this point, armed with Reaper and that underrated gem called ReaSynth, I tried to model the sound of each individual note on four different tracks. After days of failed attempts, I finally found a satisfactory combination of waveform and filters.

I won't write the "recipe" here, but as others have already written on other blogs, the starting sound is the square wave. Then, there's a combination of high-pass and low-pass filters to get the final sound.

In the digital domain, generating square waves means you need to control the extreme aliasing they can create.

I tried several approaches, avoiding oversampling, which could be too demanding for the limited processing power of smartphones (at least for my old Samsung J6).

Soft clipping was the key to keeping the aliasing under control. I didn't eliminate it completely because, judging by the samples I used as a reference, a bit of aliasing is also present in the original instrument (assuming this aliasing was not created by the audio interface during recording).
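I won't disclose my exact shaping function, but the general idea of a soft-clipped square wave can be sketched as follows. This is a generic illustration, not my recipe: it assumes `tanh` as the soft clipper, and the class name `SoftSquareOscillator` and the `drive` parameter are hypothetical. A sine is amplified and squashed, so the result approaches a square wave as `drive` grows, while the rounded edges keep the upper harmonics weaker than those of an ideal square wave.

```cpp
#include <cmath>

constexpr double kTwoPi = 6.283185307179586;

// A sine oscillator pushed through a tanh soft clipper.
// Low drive gives an almost pure sine; high drive approaches a square wave.
class SoftSquareOscillator {
public:
    SoftSquareOscillator(double frequencyHz, double sampleRate, double drive)
        : phaseStep_(kTwoPi * frequencyHz / sampleRate), drive_(drive) {}

    // Returns the next output sample in the range [-1, 1].
    double next() {
        const double sine = std::sin(phase_);
        phase_ += phaseStep_;
        if (phase_ >= kTwoPi) {
            phase_ -= kTwoPi;
        }
        // Dividing by tanh(drive) keeps the peak level at 1 for any drive.
        return std::tanh(drive_ * sine) / std::tanh(drive_);
    }

private:
    double phase_ = 0.0;
    double phaseStep_;
    double drive_;
};
```

In a sketch like this, `drive` is the knob that trades brightness against aliasing: the harder the clipping, the more high harmonics are generated and the more of them fold back below the Nyquist frequency.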

In short, I managed to get a sound that is nearly identical to the original.

Armed with patience, I managed to replicate the voicings of all 84 chord combinations obtainable from the OM-84.

At this point, I had to translate everything into C++. So I had to write the function that creates the soft-clipped square wave from the sinusoidal LFO I had already written, a Low Pass Filter, a High Pass Filter, and finally all the logic that combines the chords to get the famous 84 combinations.
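For readers unfamiliar with these building blocks, here is a minimal C++ sketch of a low-pass and a high-pass filter. These are simple one-pole filters chosen purely for illustration, which is an assumption: they are not necessarily the filter types or settings I used, and the class names `OnePoleLowPass` and `OnePoleHighPass` are hypothetical.

```cpp
#include <cmath>

constexpr double kTwoPi = 6.283185307179586;

// One-pole low-pass: smooths the signal, attenuating content above the cutoff.
class OnePoleLowPass {
public:
    OnePoleLowPass(double cutoffHz, double sampleRate)
        : coeff_(1.0 - std::exp(-kTwoPi * cutoffHz / sampleRate)) {}

    double process(double input) {
        // Move the stored state a fraction of the way towards the input.
        state_ += coeff_ * (input - state_);
        return state_;
    }

private:
    double coeff_;
    double state_ = 0.0;
};

// One-pole high-pass: the input minus its low-passed version.
class OnePoleHighPass {
public:
    OnePoleHighPass(double cutoffHz, double sampleRate)
        : lowPass_(cutoffHz, sampleRate) {}

    double process(double input) {
        return input - lowPass_.process(input);
    }

private:
    OnePoleLowPass lowPass_;
};
```

A voice built this way would pass every oscillator sample through both filters, for example `highPass.process(lowPass.process(sample))`, and a chord would be the sum of several such voices.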

My next step was to prototype the graphical interface in Flutter: the 36 chord buttons. It’s ugly and not final, but it’s functional!

For now, everything works wonderfully. The project is starting to take shape.

In the third part, I will talk about the most characteristic sound, the sound that makes everyone fall in love with the Omnichord: the strum plate sound.
